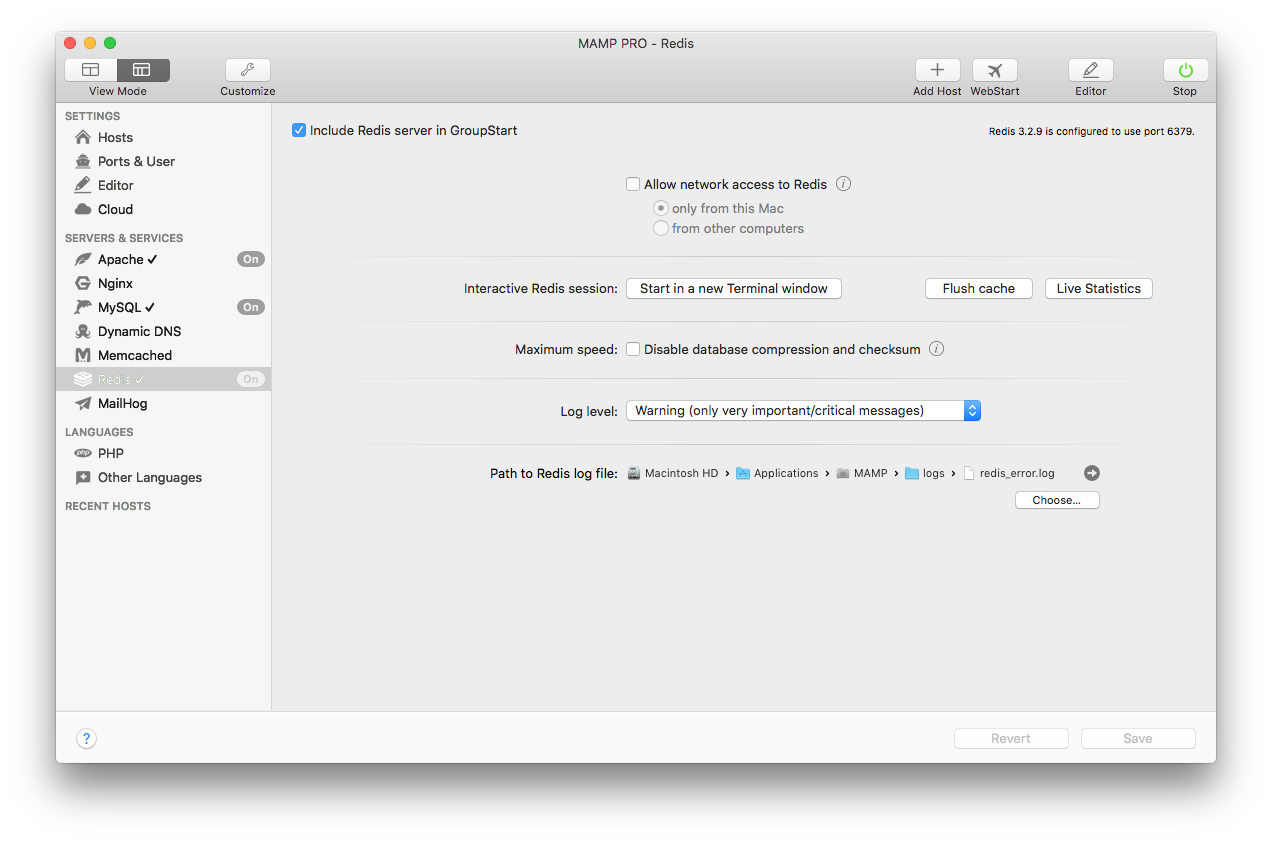

MAMP worked pretty well, but it has one major flaw: all apps share the same PHP configuration, as well as the same database server and cache service (such as Redis), if the application uses them. Docker, by contrast, creates an isolated container for each application, in which only that application runs and which only that application can access.

If you want quick results, just wait a few months for MAMP PRO to include a new version of PHP. It will save you time, money and frustration (and keep you blissfully unaware of the gruesome process of compiling software from scratch). Otherwise, proceed at your own risk and peril.

The editions differ in the number of hosts: unlimited for MAMP PRO on Mac and on Windows, 1 for the free MAMP (Mac & Windows). The web servers are Apache and Nginx; Redis and Postfix / SMTP configuration are also listed. Other new features in MAMP PRO include support for MySQL 5.7, integration of the Redis caching server, remote editing of the editor, a redesigned toolbar, an optimized host creation dialog and more. Note: MAMP PRO is a 14-day trial version; MAMP can be used at no cost.

In case this cookie is set, Apache routes the request to the 'debug' instance instead, via some clever mod_proxy_fcgi directives. With external object caching, like Redis, this actually works out all right. With things that are specific to an interpreter instance, like APCu, probably not so well.

- # Note on units: when memory size is needed, it is possible to specify

- #

- # 1kb => 1024 bytes

- # 1mb => 1024*1024 bytes

- # 1gb => 1024*1024*1024 bytes

- # units are case insensitive so 1GB 1Gb 1gB are all the same.

- # By default Redis does not run as a daemon. Use 'yes' if you need it.

- # Note that Redis will write a pid file in /var/run/redis.pid when daemonized.

- # When running daemonized, Redis writes a pid file in /var/run/redis.pid by

- # default. You can specify a custom pid file location here.

- # Accept connections on the specified port, default is 6379.

- # If port 0 is specified Redis will not listen on a TCP socket.

- # If you want you can bind a single interface, if the bind option is not

- # specified all the interfaces will listen for incoming connections.

- # bind 127.0.0.1

- # Specify the path for the unix socket that will be used to listen for

- # incoming connections. There is no default, so Redis will not listen

- #

- # Close the connection after a client is idle for N seconds (0 to disable)

- # it can be one of:

- # debug (a lot of information, useful for development/testing)

- # verbose (many rarely useful info, but not a mess like the debug level)

- # notice (moderately verbose, what you want in production probably)

- # warning (only very important / critical messages are logged)

- # Specify the log file name. Also 'stdout' can be used to force

- # Redis to log on the standard output. Note that if you use standard

- # output for logging but daemonize, logs will be sent to /dev/null

- # To enable logging to the system logger, just set 'syslog-enabled' to yes,

- # and optionally update the other syslog parameters to suit your needs.

- syslog-ident redis

- # Specify the syslog facility. Must be USER or between LOCAL0-LOCAL7.

- # Set the number of databases. The default database is DB 0, you can select

- # a different one on a per-connection basis using SELECT <dbid> where

- databases 16

- ################################ SNAPSHOTTING #################################

- # Save the DB on disk:

- # save <seconds> <changes>

- # Will save the DB if both the given number of seconds and the given

- # number of write operations against the DB occurred.

- # In the example below the behaviour will be to save:

- # after 900 sec (15 min) if at least 1 key changed

- # after 300 sec (5 min) if at least 10 keys changed

- #

- # Note: you can disable saving at all commenting all the 'save' lines.

- save 900 1

- save 60 10000

- # Compress string objects using LZF when dump .rdb databases?

- # For default that's set to 'yes' as it's almost always a win.

- # If you want to save some CPU in the saving child set it to 'no' but

- # the dataset will likely be bigger if you have compressible values or keys.

- dbfilename dump.rdb

- # The working directory.

- # The DB will be written inside this directory, with the filename specified

- # above using the 'dbfilename' configuration directive.

- # Also the Append Only File will be created inside this directory.

- # Note that you must specify a directory here, not a file name.

- ################################# REPLICATION #################################

- # Master-Slave replication. Use slaveof to make a Redis instance a copy of

- # another Redis server. Note that the configuration is local to the slave

- # so for example it is possible to configure the slave to save the DB with a

- # different interval, or to listen to another port, and so on.

- # slaveof <masterip> <masterport>

- # If the master is password protected (using the 'requirepass' configuration

- # directive below) it is possible to tell the slave to authenticate before

- # starting the replication synchronization process, otherwise the master will

- #

- # When a slave lost the connection with the master, or when the replication

- # is still in progress, the slave can act in two different ways:

- # 1) if slave-serve-stale-data is set to 'yes' (the default) the slave will

- # still reply to client requests, possibly with out of date data, or the

- # data set may just be empty if this is the first synchronization.

- # 2) if slave-serve-stale-data is set to 'no' the slave will reply with

- # an error 'SYNC with master in progress' to all the kind of commands

- #

- ################################## SECURITY ###################################

- # Require clients to issue AUTH <PASSWORD> before processing any other

- # commands. This might be useful in environments in which you do not trust

- # others with access to the host running redis-server.

- # This should stay commented out for backward compatibility and because most

- # people do not need auth (e.g. they run their own servers).

- # Warning: since Redis is pretty fast an outside user can try up to

- # 150k passwords per second against a good box. This means that you should

- # use a very strong password otherwise it will be very easy to break.

- # requirepass foobared

- # Command renaming.

- # It is possible to change the name of dangerous commands in a shared

- # environment. For instance the CONFIG command may be renamed into something

- # hard to guess so that it will be still available for internal-use

- #

- #

- # rename-command CONFIG b840fc02d524045429941cc15f59e41cb7be6c52

- # It is also possible to completely kill a command renaming it into

- #

- ################################### LIMITS ####################################

- # Set the max number of connected clients at the same time. By default there

- # is no limit, and it's up to the number of file descriptors the Redis process

- # is able to open. The special value '0' means no limits.

- # Once the limit is reached Redis will close all the new connections sending

- #

- # Don't use more memory than the specified amount of bytes.

- # When the memory limit is reached Redis will try to remove keys with an

- # EXPIRE set. It will try to start freeing keys that are going to expire

- # in little time and preserve keys with a longer time to live.

- # Redis will also try to remove objects from free lists if possible.

- # If all this fails, Redis will start to reply with errors to commands

- # that will use more memory, like SET, LPUSH, and so on, and will continue

- #

- # WARNING: maxmemory can be a good idea mainly if you want to use Redis as a

- # 'state' server or cache, not as a real DB. When Redis is used as a real

- # database the memory usage will grow over the weeks, it will be obvious if

- # it is going to use too much memory in the long run, and you'll have the time

- # to upgrade. With maxmemory after the limit is reached you'll start to get

- # errors for write operations, and this may even lead to DB inconsistency.

- # maxmemory <bytes>

- # MAXMEMORY POLICY: how Redis will select what to remove when maxmemory

- #

- # volatile-lru -> remove the key with an expire set using an LRU algorithm

- # allkeys-lru -> remove any key according to the LRU algorithm

- # volatile-random -> remove a random key with an expire set

- # volatile-ttl -> remove the key with the nearest expire time (minor TTL)

- # noeviction -> don't expire at all, just return an error on write operations

- # Note: with all the kind of policies, Redis will return an error on write

- # operations, when there are not suitable keys for eviction.

- # At the date of writing these commands are: set setnx setex append

- # incr decr rpush lpush rpushx lpushx linsert lset rpoplpush sadd

- # sinter sinterstore sunion sunionstore sdiff sdiffstore zadd zincrby

- # zunionstore zinterstore hset hsetnx hmset hincrby incrby decrby

- #

- #

- # LRU and minimal TTL algorithms are not precise algorithms but approximated

- # algorithms (in order to save memory), so you can select as well the sample

- # size to check. For instance, by default Redis will check three keys and

- # pick the one that was used less recently, you can change the sample size

- #

- ############################## APPEND ONLY MODE ###############################

- # By default Redis asynchronously dumps the dataset on disk. If you can live

- # with the idea that the latest records will be lost if something like a crash

- # happens this is the preferred way to run Redis. If instead you care a lot

- # about your data and don't want a single record to get lost you should

- # enable the append only mode: when this mode is enabled Redis will append

- # every write operation received in the file appendonly.aof. This file will

- # be read on startup in order to rebuild the full dataset in memory.

- # Note that you can have both the async dumps and the append only file if you

- # like (you have to comment the 'save' statements above to disable the dumps).

- # Still if append only mode is enabled Redis will load the data from the

- #

- # IMPORTANT: Check the BGREWRITEAOF to check how to rewrite the append

- # The name of the append only file (default: 'appendonly.aof')

- # The fsync() call tells the Operating System to actually write data on disk

- # instead of waiting for more data in the output buffer. Some OS will really flush

- # data on disk, some other OS will just try to do it ASAP.

- # Redis supports three different modes:

- # no: don't fsync, just let the OS flush the data when it wants. Faster.

- # always: fsync after every write to the append only log. Slow, Safest.

- # everysec: fsync only if one second passed since the last fsync. Compromise.

- # The default is 'everysec' that's usually the right compromise between

- # speed and data safety. It's up to you to understand if you can relax this to

- # 'no' that will let the operating system flush the output buffer when

- # it wants, for better performance (but if you can live with the idea of

- # some data loss consider the default persistence mode that's snapshotting),

- # or on the contrary, use 'always' that's very slow but a bit safer than

- #

- appendfsync everysec

- # When the AOF fsync policy is set to always or everysec, and a background

- # saving process (a background save or AOF log background rewriting) is

- # performing a lot of I/O against the disk, in some Linux configurations

- # Redis may block too long on the fsync() call. Note that there is no fix for

- # this currently, as even performing fsync in a different thread will block

- #

- # In order to mitigate this problem it's possible to use the following option

- # that will prevent fsync() from being called in the main process while a

- #

- # This means that while another child is saving the durability of Redis is

- # the same as 'appendfsync none', that in practical terms means that it is

- # possible to lose up to 30 seconds of log in the worst scenario (with the

- #

- # If you have latency problems turn this to 'yes'. Otherwise leave it as

- # 'no' that is the safest pick from the point of view of durability.

- ################################ VIRTUAL MEMORY ###############################

- # Virtual Memory allows Redis to work with datasets bigger than the actual

- # amount of RAM needed to hold the whole dataset in memory.

- # In order to do so very used keys are taken in memory while the other keys

- # are swapped into a swap file, similarly to what operating systems do

- #

- # To enable VM just set 'vm-enabled' to yes, and set the following three

- # vm-enabled yes

- # This is the path of the Redis swap file. As you can guess, swap files

- # can't be shared by different Redis instances, so make sure to use a swap

- # file for every redis process you are running. Redis will complain if the

- #

- # The best kind of storage for the Redis swap file (that's accessed at random)

- #

- # *** WARNING *** if you are using a shared hosting the default of putting

- # the swap file under /tmp is not secure. Create a dir with access granted

- # only to Redis user and configure Redis to create the swap file there.

- # vm-max-memory configures the VM to use at max the specified amount of

- # RAM. Everything that does not fit will be swapped on disk *if* possible, that

- # is, if there is still enough contiguous space in the swap file.

- # With vm-max-memory 0 the system will swap everything it can. Not a good

- # default, just specify the max amount of RAM you can in bytes, but it's

- # better to leave some margin. For instance specify an amount of RAM

- # that's more or less between 60 and 80% of your free RAM.

- # The Redis swap file is split into pages. An object can be saved using multiple

- # contiguous pages, but pages can't be shared between different objects.

- # So if your page is too big, small objects swapped out on disk will waste

- # a lot of space. If your page is too small, there is less space in the swap

- # file (assuming you configured the same number of total swap file pages).

- # If you use a lot of small objects, use a page size of 64 or 32 bytes.

- # If you use a lot of big objects, use a bigger page size.

- vm-page-size 32

- # Number of total memory pages in the swap file.

- # Given that the page table (a bitmap of free/used pages) is taken in memory,

- # every 8 pages on disk will consume 1 byte of RAM.

- # The total swap size is vm-page-size * vm-pages

- # With the default of 32-bytes memory pages and 134217728 pages Redis will

- # use a 4 GB swap file, that will use 16 MB of RAM for the page table.

- # It's better to use the smallest acceptable value for your application,

- # but the default is large in order to work in most conditions.

- # Max number of VM I/O threads running at the same time.

- # These threads are used to read/write data from/to swap file, since they

- # also encode and decode objects from disk to memory or the reverse, a bigger

- # number of threads can help with big objects even if they can't help with

- # I/O itself as the physical device may not be able to cope with many

- #

- # The special value of 0 turns off threaded I/O and enables the blocking

- vm-max-threads 4

- ############################### ADVANCED CONFIG ###############################

- # Hashes are encoded in a special way (much more memory efficient) when they

- # have at max a given number of elements, and the biggest element does not

- # exceed a given threshold. You can configure these limits with the following

- hash-max-zipmap-entries 512

- # Similarly to hashes, small lists are also encoded in a special way in order

- # to save a lot of space. The special representation is only used when

- list-max-ziplist-entries 512

- # Sets have a special encoding in just one case: when a set is composed

- # of just strings that happen to be integers in radix 10 in the range

- # The following configuration setting sets the limit in the size of the

- # set in order to use this special memory saving encoding.

- # Active rehashing uses 1 millisecond every 100 milliseconds of CPU time in

- # order to help rehashing the main Redis hash table (the one mapping top-level

- # keys to values). The hash table implementation redis uses (see dict.c)

- # performs a lazy rehashing: the more operations you run against a hash table

- # that is rehashing, the more rehashing 'steps' are performed, so if the

- # server is idle the rehashing is never complete and some more memory is used

- #

- # The default is to use this millisecond 10 times every second in order to

- # actively rehash the main dictionaries, freeing memory when possible.

- # If unsure:

- # use 'activerehashing no' if you have hard latency requirements and it is

- # not a good thing in your environment that Redis can reply from time to time

- #

- # use 'activerehashing yes' if you don't have such hard requirements but

- activerehashing yes

- ################################## INCLUDES ###################################

- # Include one or more other config files here. This is useful if you

- # have a standard template that goes to all Redis servers but also need

- # to customize a few per-server settings. Include files can include

- #

- # include /path/to/other.conf
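The unit rules and the swap file sizing described in the configuration comments above can be checked with a little shell arithmetic. This is only a sketch: the function names to_bytes, swap_file_bytes and page_table_bytes are made up for illustration and are not part of Redis, and only the kb, mb and gb suffixes quoted above are handled.

```shell
#!/bin/sh
# Illustrative helpers only; none of these functions is part of Redis.

# Convert a size such as 1kb, 5MB or 2gB to bytes (suffixes are case insensitive).
to_bytes() {
    value=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
    case "$value" in
        *kb) echo $(( ${value%kb} * 1024 )) ;;
        *mb) echo $(( ${value%mb} * 1024 * 1024 )) ;;
        *gb) echo $(( ${value%gb} * 1024 * 1024 * 1024 )) ;;
        *)   echo "$value" ;;   # no known suffix: pass the value through unchanged
    esac
}

# Total swap size is vm-page-size * vm-pages.
swap_file_bytes() {
    echo $(( $1 * $2 ))
}

# The page table is a bitmap, so every 8 pages on disk cost 1 byte of RAM.
page_table_bytes() {
    echo $(( $1 / 8 ))
}

to_bytes 1GB                     # same result as 1gb or 1Gb
swap_file_bytes 32 134217728     # 32-byte pages, 134217728 pages
page_table_bytes 134217728
```

The three calls print 1073741824, 4294967296 (the 4 GB swap file) and 16777216 (the 16 MB page table), which matches the figures in the comments.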

Just recently I wanted to install Redis and phpredis, the PHP extension that is fast because it is written in C, on my MacBook Pro.

I learned that I would need to recompile PHP, so I decided to switch from the convenient but not really extensible MAMP.app to MacPorts, which feels more like Linux (apt-get …). At the end of this article I list some further links on getting started with MacPorts. I assume that you have correctly installed Apache 2 and PHP 5.3.5 with MacPorts.

Installation of Redis Server

Head to your terminal and type the following:

sudo port selfupdate

sudo port upgrade outdated

sudo port install redis

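You have now installed the Redis server! To run Redis, start the server with the configuration file that MacPorts installs (the path assumes the default /opt/local prefix):

sudo redis-server /opt/local/etc/redis.conf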

… and to stop it type

sudo killall redis-server
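To confirm that the server actually came up (or went down), you can ask it for a reply. A minimal sketch, assuming redis-cli was installed alongside the server and that Redis listens on the default port 6379; the REDIS_CLI variable and the redis_is_up function are names I made up for this example.

```shell
#!/bin/sh
# REDIS_CLI is an assumption of this sketch so a different client binary can be used.
REDIS_CLI=${REDIS_CLI:-redis-cli}

# Succeeds if the server on the given port (default 6379) answers PING with PONG.
redis_is_up() {
    [ "$("$REDIS_CLI" -p "${1:-6379}" ping 2>/dev/null)" = "PONG" ]
}

if redis_is_up; then
    echo "Redis is answering on port 6379"
else
    echo "Redis is not reachable on port 6379"
fi
```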

Installation of the PHP Extension

First we create a new directory to hold all the source code, then we get the latest code via Git, and then we build it for PHP. To make it easy, just copy and paste the following lines into your terminal:

cd /usr/local

sudo mkdir src

cd /usr/local/src

sudo mkdir phpredis-build

cd phpredis-build

sudo git clone --depth 1 git://github.com/owlient/phpredis.git

cd phpredis

sudo phpize

sudo ./configure

sudo make

sudo make install

Now just create the necessary ini-file for the extension:

cd /opt/local/var/db/php5/

sudo nano redis.ini

Add the line extension=redis.so to the file and save it.
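If you prefer not to open an editor, the same one-line file can be written from the shell. A minimal sketch; write_redis_ini is a hypothetical helper name, and the directory in the example comment is the one used above.

```shell
#!/bin/sh
# Hypothetical helper: write the one-line ini file into the given directory.
write_redis_ini() {
    printf 'extension=redis.so\n' > "$1/redis.ini"
}

# Example (needs write access to the directory, so run it from a root shell):
#   write_redis_ini /opt/local/var/db/php5
```

From a normal user shell, piping the line through sudo tee does the same job: echo "extension=redis.so" | sudo tee /opt/local/var/db/php5/redis.ini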

Now just restart Apache and you're ready to go!
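A quick way to see whether PHP picked up the extension is to look for it in the module list. A minimal sketch, assuming the php binary on your PATH is the MacPorts one; PHP_BIN and php_has_extension are names I made up for this example.

```shell
#!/bin/sh
# PHP_BIN is an assumption of this sketch so a different php binary can be used.
PHP_BIN=${PHP_BIN:-php}

# Succeeds if `php -m` (the list of loaded modules) contains the given name.
php_has_extension() {
    "$PHP_BIN" -m 2>/dev/null | grep -qix "$1"
}

if php_has_extension redis; then
    echo "phpredis is loaded"
else
    echo "phpredis is not loaded"
fi
```

Note that this checks command-line PHP. If Apache uses a different configuration, a page that calls phpinfo() is the more reliable check.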

Links for the installation of MacPorts:

https://trac.macports.org/wiki/howto/MAMP

http://blogwaffe.com/2010/01/22/php-5-apache-2-mysql-5-on-os-x-via-macports/